AI and Impact: The Good, the Bad, and the Opportunity

- Foundation House

- Oct 27, 2025

Updated: Oct 29, 2025

Greenwich, CT - October 24, 2025

This blog post reflects discussions held under the Chatham House Rule. While the ideas and information shared are presented here, the identities and affiliations of contributors have been kept confidential to encourage open dialogue.

---

INTRODUCTION

On October 24, Foundation House explored the rapidly evolving world of artificial intelligence and its implications for social impact. The day began with a shared commitment to collaboration, community-building, and continuous engagement, recognizing that progress in the age of AI will require not only innovation but also collective stewardship.

Participants brought a mix of curiosity and caution, reflecting on their personal and professional relationships with AI: its promise, its pitfalls, and its power to reshape how we learn, work, and connect. What followed was a series of dynamic discussions examining the environmental, ethical, and societal dimensions of this transformative technology.

IMPACT

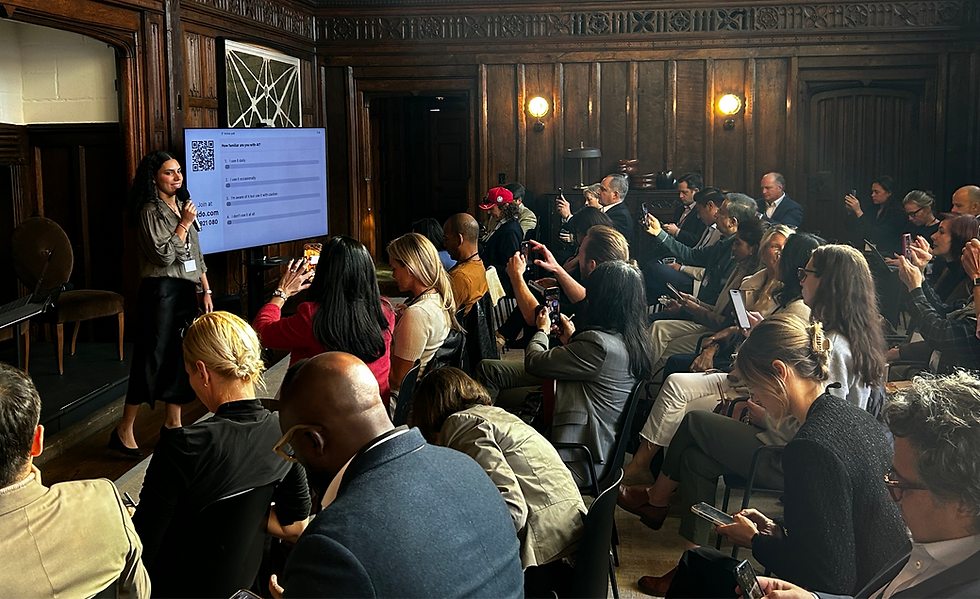

An opening poll revealed that nearly all attendees use AI in some form, with most feeling cautiously optimistic about its role in their lives. Conversations quickly centered on AI’s dual nature: its potential to improve lives through efficiency and personalization, and its risk of deepening inequities or eroding human trust.

Speakers explored how AI is enhancing sectors such as healthcare, education, and mental health. In mental health specifically, AI-powered tools can provide immediate support during moments of crisis, working in concert with clinicians rather than replacing them. Panelists highlighted AI's potential to address both access barriers and administrative burden; clinicians spend up to 40% of their time on documentation, exacerbating workforce shortages. By handling note-taking and routine tasks, AI can free up clinician time for patient care.

Environmental considerations loomed large. Participants noted that AI’s energy demand already exceeds 1.2 billion kilowatt-hours annually, with rising water and land use from data centers. The phrase “compute is the new oil” captured both the scale of opportunity and the urgency of accountability. Still, emerging technologies are improving efficiency through optimized power and cooling systems that can cut data center energy use by up to 4%, and they are driving innovation in climate modeling, precision agriculture, and emissions tracking.

Ethics and governance emerged as another focal point. A preview of national research on public attitudes toward AI showed widespread concern about misinformation, job disruption, and safety, alongside uncertainty about who bears responsibility for ethical use. The consensus: meaningful safeguards must come from collaboration between technology developers, policymakers, educators, and communities, not from any one sector alone.

Finally, the “AI and Society” dialogue reframed workforce disruption as both a challenge and an opportunity. Participants discussed the rise of skills-based learning, micro-credentialing, and fractional or entrepreneurial work enabled by AI tools. With accessible AI education, the technology could expand, not contract, economic mobility.

INITIATIVES

Across sessions, participants outlined tangible next steps to maximize positive impact and minimize harm:

- Invest in collaboration and community-building, ensuring diverse perspectives are included as AI tools evolve.

- Advance ethical AI development by embedding transparency, data sovereignty, and privacy protections, particularly for children and marginalized groups.

- Design and deploy AI for good in areas such as mental health, education, sustainability, and nonprofit work, augmenting rather than replacing human expertise.

- Prioritize workforce development, reskilling, and lifelong learning to help individuals adapt to changing job markets.

- Address environmental costs through sustainable infrastructure, mindful deployment, and policy advocacy that aligns technology growth with climate goals.

The event concluded with a clear throughline: the future of AI depends on shared values as much as shared code. Participants left with a three-part call to action: check whether the organizations they work for or support have AI policies in place, help build AI literacy in their communities, and ensure that those developing and deploying AI represent diverse perspectives. This is not merely a technological frontier but a test of collective wisdom, requiring renewed commitment to responsible innovation, collaborative governance, and human-centered design.

For more details on the speakers and agenda, visit the event page.

---

This information is for informational purposes only and does not constitute professional advice of any kind, including but not limited to investment, tax, legal, medical, or mental health. Always consult with qualified professionals for advice tailored to your specific circumstances.